The speed at which AI discovers vulnerabilities has surpassed the speed at which those vulnerabilities can be patched.

On March 27, an unsecured data cache at Anthropic exposed around 3,000 internal files. One draft blog post revealed an upcoming model, Mythos, which Anthropic itself described as "far surpassing any AI model in cybersecurity capability." On the same day, CrowdStrike and Okta each plummeted 7%, while Palo Alto Networks fell 6%.

The market did not panic because a more powerful model had emerged. It panicked because the model's own creator said that progress on the attack side is outpacing the defense side's ability to keep up.

AI Cybersecurity Dominance

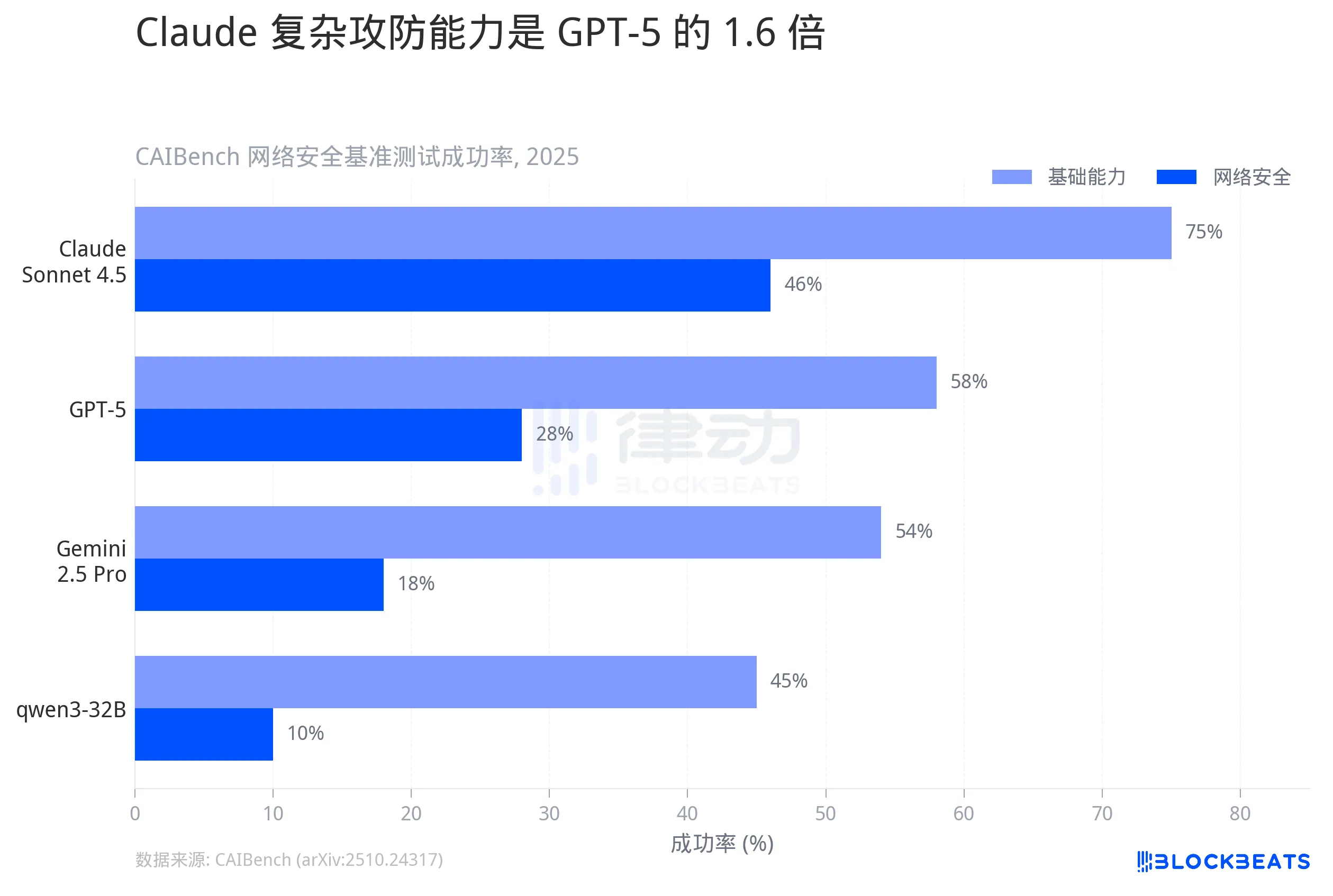

According to results from the academic benchmark CAIBench, Claude Sonnet achieved a 46% success rate on Cybench, a test that simulates a real attack-defense environment. The second-ranked GPT-5 was at 28%, Google's Gemini 2.5 Pro reached only 18%, and the open-source model qwen3-32B was lower still at 10%.

While 46% may not seem high, this is the success rate on complex penetration tasks that include steps such as vulnerability discovery, exploit-chain construction, and privilege escalation. On the more basic Base test, Claude's success rate has already hit 75%, nearing its ceiling.

The difference is not a matter of one model being slightly better; it is a difference in magnitude. On complex attack-defense tasks, Claude's success rate is about 1.6 times that of GPT-5 and about 2.6 times that of Gemini 2.5 Pro. In cybersecurity, the distribution of abilities among models is not a ladder but a gap.
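The multiples can be recomputed directly from the quoted Cybench success rates. The snippet below is a minimal sketch; the dictionary and the `lead_multiples` helper are illustrative names, not part of CAIBench or any published tooling.

```python
# Cybench success rates (percent), as quoted in the text above.
cybench_success = {
    "Claude Sonnet": 46,
    "GPT-5": 28,
    "Gemini 2.5 Pro": 18,
    "qwen3-32B": 10,
}


def lead_multiples(scores, leader):
    """Return leader_score / other_score for every other model, rounded to 2 places."""
    top = scores[leader]
    return {
        name: round(top / score, 2)
        for name, score in scores.items()
        if name != leader
    }


multiples = lead_multiples(cybench_success, "Claude Sonnet")
for name, multiple in multiples.items():
    print(f"Claude Sonnet vs {name}: {multiple}x")
```

On these figures the lead over the open-source model is wider still, at 4.6 times.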

Doubling in 6 Months

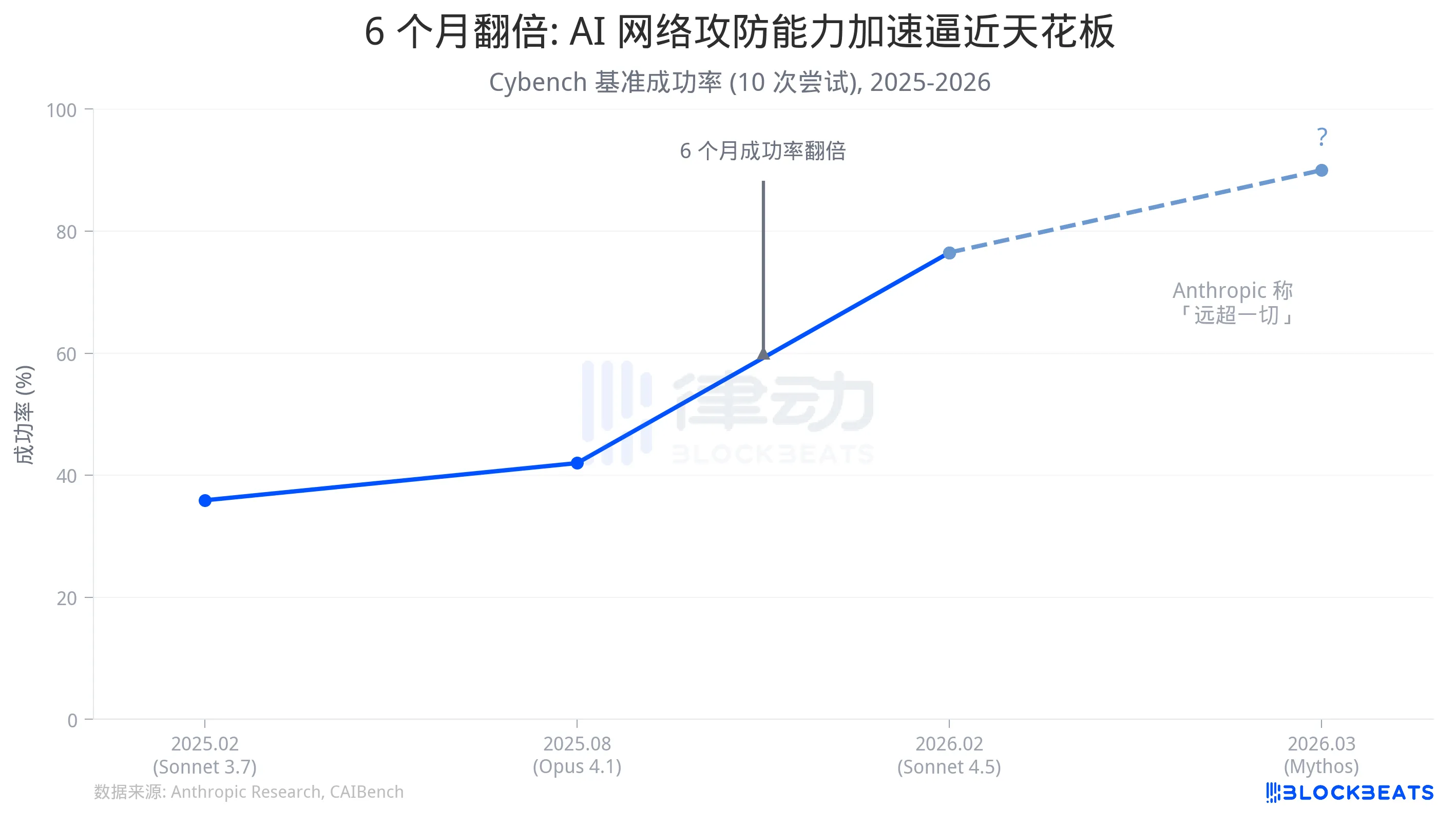

More telling than the gap between models is the speed of improvement over time.

According to Anthropic's official data, Sonnet 3.7, released in February 2025, achieved a 35.9% success rate on Cybench (10 attempts). In the latter half of the same year, Sonnet 4.5 reached 76.5%. Anthropic's research team concluded that the success rate doubled within 6 months.
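As a rough check on the "doubled" claim, the two Cybench figures give a growth factor slightly above 2. The sketch below also derives an implied doubling time, which rests on a strong assumption that is not in the source: constant exponential growth across the 6-month interval. A success rate is capped at 100%, so this can only describe the interval itself, not a long-run trend.

```python
import math

# Cybench success rates (percent, 10 attempts), as quoted in the text above.
RATE_SONNET_3_7 = 35.9
RATE_SONNET_4_5 = 76.5
INTERVAL_MONTHS = 6  # the interval stated in the text

# How many times higher the later success rate is.
growth_factor = RATE_SONNET_4_5 / RATE_SONNET_3_7

# Implied doubling time, ASSUMING constant exponential growth over the interval.
doubling_months = INTERVAL_MONTHS * math.log(2) / math.log(growth_factor)

print(f"growth factor: {growth_factor:.2f}x")
print(f"implied doubling time: {doubling_months:.1f} months")
```

The growth factor comes out at about 2.13, consistent with "doubled," and the implied doubling time is about 5.5 months under the stated assumption.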

What does this speed mean in practice? Consider a real-world case: in March this year, Claude Opus 4.6 was used to audit the Firefox codebase. According to InfoQ, it found 22 security vulnerabilities within two weeks, 14 of them high-risk. These vulnerabilities had gone undetected despite years of manual audits and millions of CPU hours of fuzz testing. Anthropic's security team previously disclosed that Claude had uncovered over 500 high-risk vulnerabilities in multiple production-grade open-source projects, some of which had been present for decades.

By comparison, the industry-standard timeline for a traditional penetration test is 2 to 3 weeks, and that covers just one application. According to the Verizon 2025 Data Breach Investigations Report, the median time from public disclosure of a critical vulnerability to mass exploitation by attackers is 5 days, while the median time to patch is 32 to 38 days.

The speed at which AI discovers vulnerabilities is growing exponentially, while human patching speed is linear. The difference in time is the attack window.
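The size of that window follows from the two medians quoted above. This is a back-of-the-envelope sketch: subtracting one median from another is an approximation rather than a statistic reported by Verizon, and the `exposure_window` helper is a hypothetical name used only for illustration.

```python
# Median figures (days) as quoted above from the Verizon 2025 DBIR.
EXPLOIT_MEDIAN_DAYS = 5        # disclosure -> mass exploitation
PATCH_MEDIAN_DAYS = (32, 38)   # disclosure -> patch, low and high end of the range


def exposure_window(exploit_days, patch_days):
    """Days during which a flaw is being exploited but is not yet patched."""
    return max(0, patch_days - exploit_days)


window = tuple(exposure_window(EXPLOIT_MEDIAN_DAYS, p) for p in PATCH_MEDIAN_DAYS)
print(f"approximate attack window: {window[0]} to {window[1]} days")
```

On these numbers the window is roughly 27 to 33 days, and it widens as the time to exploitation shrinks.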

In the leaked Mythos draft, Anthropic wrote that this model "heralds a coming wave of models that can exploit vulnerabilities in a way far beyond the defender's efforts." Based on the publicly known capability curve, this is not an exaggeration.

The Faster the Release, the More Urgent the Warning

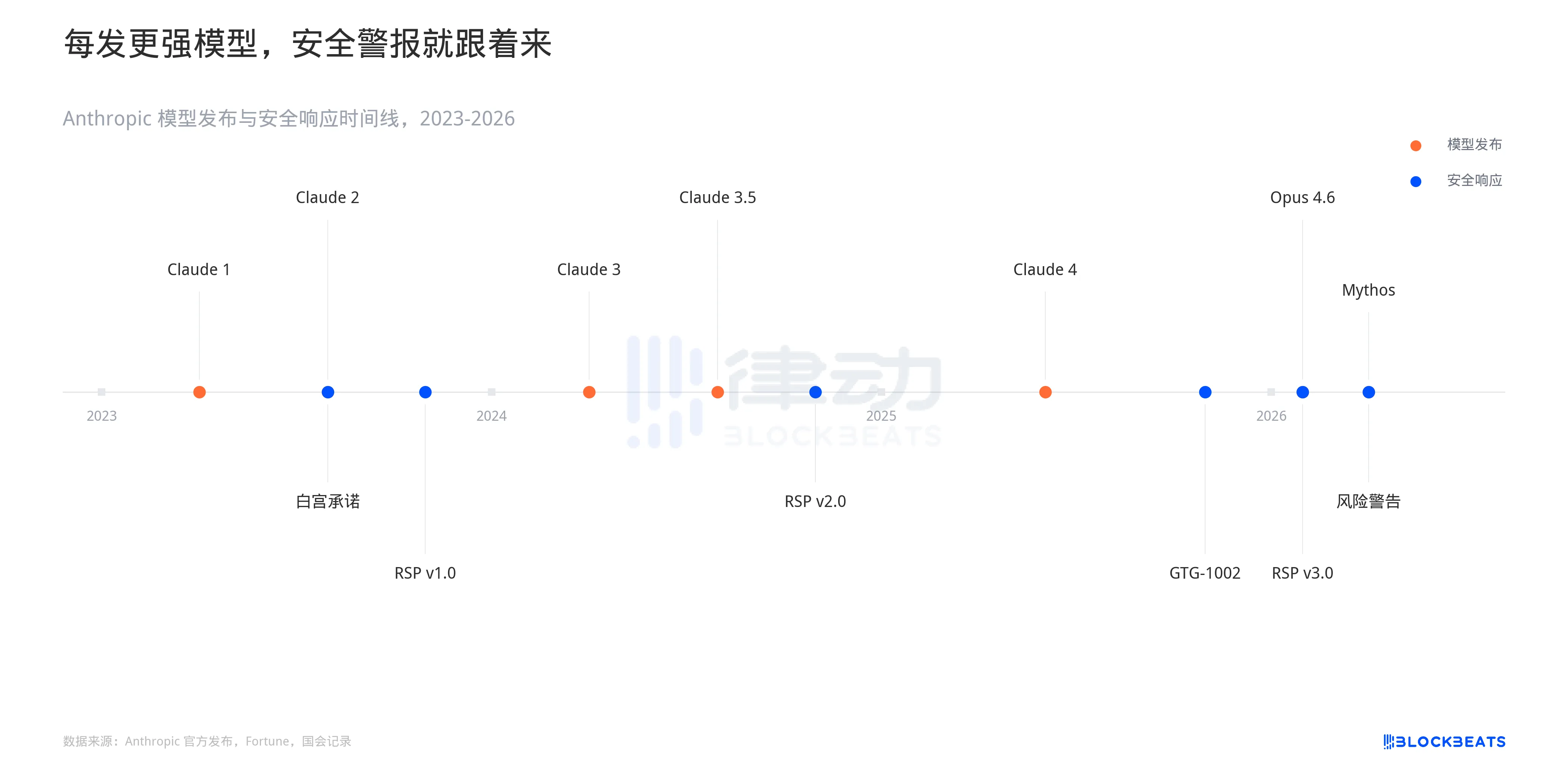

If you put Anthropic's actions over the past three years on a timeline, you will see a clear pattern: every time a stronger model is released, it is quickly followed by a higher-level security response.

In July 2023, Anthropic signed a voluntary pledge with the White House, followed by the release of its first Responsible Scaling Policy (RSP v1.0) in September of the same year. In October 2024, the RSP was upgraded to v2.0, adding a threshold for biochemical weapon capabilities. In November 2025, Anthropic disclosed the GTG-1002 incident, in which a China-backed threat group used Claude Code to target around 30 organizations, with AI independently executing 80% to 90% of the tactical operations. This was the first documented large-scale, AI-orchestrated espionage campaign spanning multiple organizations.

In February 2026, the RSP was updated to v3.0, alongside the release of Claude Code Security. In the same month, the Pentagon labeled Anthropic a "supply chain risk" because Anthropic refused to remove contract clauses prohibiting large-scale surveillance and fully autonomous weapons. A month later, the Mythos leak revealed that Anthropic had acknowledged in the draft that this model poses "unprecedented cybersecurity risks."

The pace of capability releases is accelerating. There was a one-year gap between Claude 1 and Claude 3, and less than three months between Opus 4.5 and Opus 4.6. Security responses are also accelerating, but they are always reactive: capabilities are exploited first, and policy patches come later. The collective drop in cybersecurity stocks on March 27 was the market pricing in this time lag.

A Dark Reading survey earlier this year revealed that 48% of cybersecurity professionals identified AI-powered agents as the top attack vector for 2026. Two years ago, this option was hardly at the top of the list.

Anthropic's Mythos release strategy involves providing early access to defensive organizations, "giving them a first-mover advantage." This statement itself acknowledges the asymmetry between offense and defense. Defenders would not need a first-mover advantage unless attackers were already at the doorstep.
